In this article, I will walk you through installing n8n and an LLM locally. I have also created a YouTube video that walks through the same steps.

Let's get started.

Install Ollama

Ollama is a desktop app that lets you install and run open-source models on your computer. Go to https://ollama.com/download, choose your operating system, then hit download.

Wait for the download to finish, then double-click the downloaded Ollama.exe and follow the installation steps. When it is done, open the Ollama desktop app.

You should see the chat interface, with all of your chats listed on the left. To start using it, select the desired model right below the input field.

Write your message, then hit Enter. Ollama will automatically download the model and use it right away.

**Note:** Make sure you have the resources to run the model. If you have 16GB of RAM (like me), any model with fewer than 3B parameters should work fine. If you have less, I recommend the gemma3:270m model.
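To confirm that a downloaded model also responds outside the desktop window, a minimal sketch like the one below can send a single prompt to Ollama's local HTTP API. It assumes Ollama is running on its default port 11434 and that gemma3:270m has already been downloaded. The helper names `build_generate_request` and `ask` are made up for this illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to Ollama and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    try:
        # Model tag taken from the note above; use any model you have installed.
        print(ask("gemma3:270m", "Say hello in one short sentence."))
    except (OSError, ValueError, KeyError) as err:
        # OSError covers refused connections, timeouts, and HTTP errors.
        print(f"Could not get a reply from Ollama: {err}")
```

If this prints a short greeting, the model is installed and being served locally. If it prints an error, check that the Ollama app is open and the model name matches one you have downloaded.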

Install n8n locally

The next thing we want to do is install n8n. To do so, we first need to install Docker. On Windows, go to https://docs.docker.com/desktop/setup/install/windows-install/ and click the Docker Desktop for Windows download button. On Mac, go to https://docs.docker.com/desktop/setup/install/mac-install/

The download may take a while depending on your internet connection. Once it finishes, double-click the installer, follow the instructions, then open Docker Desktop.

Now that Docker is installed, open Command Prompt if you are on Windows or Terminal if you are on Mac, and type the following command:

docker volume create n8n_data

Then start n8n:

docker run -it --rm --name n8n -p 5678:5678 -v n8n_data:/home/node/.n8n docker.n8n.io/n8nio/n8n

This may take a while as Docker downloads the n8n image and everything needed to run it. When it finishes, you should see a URL near the bottom of the output.

Copy the URL, in this case 'http://localhost:5678', then open a new browser tab and paste it.
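If the page does not load, it helps to check whether anything is listening on port 5678 at all. The snippet below is a small sketch of such a check, not part of n8n itself; `port_is_open` is a hypothetical helper name, and the host and port are the ones from the URL above.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timeout, or host not found.
        return False


if __name__ == "__main__":
    # Host and port from the URL printed by the docker run command.
    if port_is_open("localhost", 5678):
        print("n8n is listening on http://localhost:5678")
    else:
        print("Nothing is listening on port 5678 yet; is the container still starting?")
```

A negative result usually means the container has not finished starting or the `docker run` command was stopped.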

Sign up, log in with your account, and start building workflows.

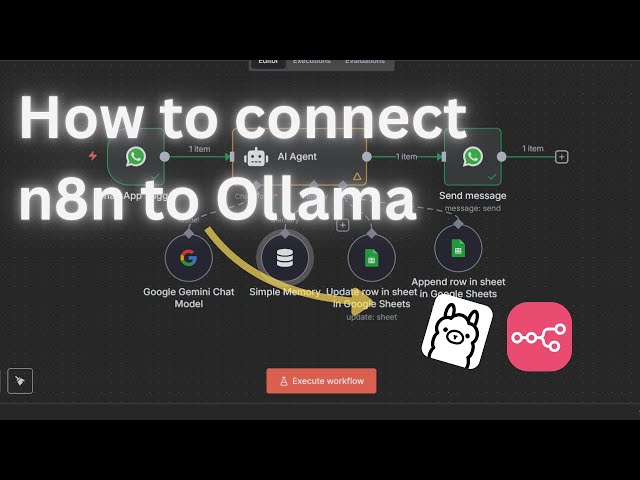

Connect n8n to Ollama

After you have installed Ollama and n8n, let's connect the two. Start by creating a simple chat trigger, followed by an AI Agent that uses "Ollama Chat Model" as its chat model.

In the Ollama Chat Model credentials, add a new credential. You will need a base_url. First, go to Ollama desktop > Settings and make sure "Expose Ollama to the network" is enabled.

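You might expect http://localhost:11434 (Ollama's default address) to work as the base_url. However, n8n runs inside a Docker container, where localhost refers to the container itself rather than your computer, so we need your computer's local IP address instead. To find it, open the command prompt and type the following: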

On Windows

ipconfig

On macOS

ifconfig

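Copy the IP address shown next to IPv4 Address (on Mac, the address after inet), then paste it into base_url followed by Ollama's port, 11434. For example, if your address were 192.168.1.10, the base_url would be http://192.168.1.10:11434.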

Note: this is a local IP address, not a public one, so don't worry about exposing it.
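As an alternative to reading the ipconfig or ifconfig output by hand, the sketch below tries to detect the local address and prints a ready-to-paste base_url. It assumes Ollama is on its default port 11434. The function names are illustrative, and on machines with a VPN or virtual network adapters the detected address may not be the one you want, so compare it with the command output above.

```python
import socket

OLLAMA_PORT = 11434  # assumption: Ollama's default port


def local_ip() -> str:
    """Return the local address this machine uses for outgoing traffic."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as probe:
        # Connecting a UDP socket sends no packets; it only makes the OS
        # pick the outgoing network interface, whose address we then read.
        probe.connect(("8.8.8.8", 80))
        return probe.getsockname()[0]


def ollama_base_url(ip: str, port: int = OLLAMA_PORT) -> str:
    """Build the value to paste into the base_url field in n8n."""
    return f"http://{ip}:{port}"


if __name__ == "__main__":
    try:
        print(ollama_base_url(local_ip()))
    except OSError as err:
        # Raised when the machine has no usable network route.
        print(f"Could not detect a local IP address: {err}")
```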

Click Test and it should turn green. You can now select a model you have installed; it should appear in the dropdown menu.

If you don't see any models, go to http://localhost:11434/v1/models, where you should see all the installed models. Copy the model id and paste it into the Model input field in n8n.
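The same lookup can be scripted. The sketch below fetches that URL and prints only the model ids, assuming the response is a JSON object with a `data` array whose entries each carry an `id` field. The function names are hypothetical.

```python
import json
import urllib.request


def model_ids(payload: dict) -> list[str]:
    """Extract the model ids from a /v1/models style response."""
    # "data" may be missing or null when no models are installed.
    return [entry["id"] for entry in (payload.get("data") or [])]


def fetch_model_ids(base_url: str = "http://localhost:11434", timeout: float = 5.0) -> list[str]:
    """Ask Ollama which models are installed and return their ids."""
    with urllib.request.urlopen(f"{base_url}/v1/models", timeout=timeout) as resp:
        return model_ids(json.loads(resp.read()))


if __name__ == "__main__":
    try:
        for model_id in fetch_model_ids():
            print(model_id)  # paste one of these into the Model field in n8n
    except (OSError, ValueError) as err:
        # OSError: Ollama not reachable; ValueError: reply was not valid JSON.
        print(f"Could not list models: {err}")
```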

Now you are ready to create workflows for free and without internet access.